CERN

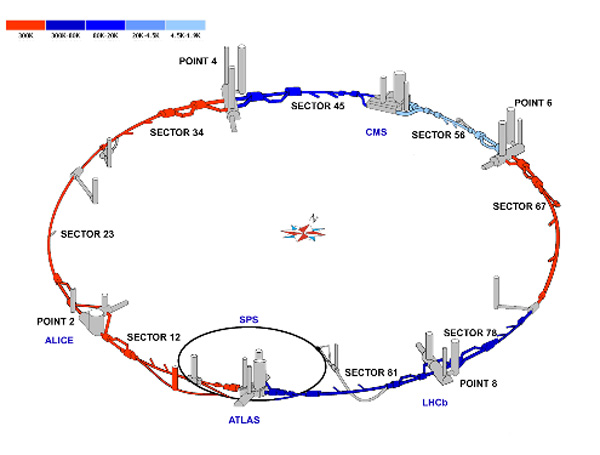

The Large Hadron Collider is mostly underground, as shown by the circle on this aerial view of the site on the French-Swiss border.

The Future of Physics

Scientific American Magazine, February 2008

Edited by Andy Ross

The terascale is the realm of physics that comes into view when two elementary particles smash together with a combined energy of around a trillion electron volts, or one TeV. The machine that will take us to the terascale, the ring-shaped Large Hadron Collider (LHC) at CERN, is now nearing completion.

To ascend through the energy scales from electron volts to the terascale is to travel from the domains of chemistry and solid-state electronics (electron volts) to nuclear reactions (millions of electron volts) to the territory that particle physicists have been investigating for the past half a century (billions of electron volts).

What lies in wait for us at the terascale? No one knows.
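
The ladder of energies above can also be read as a ladder of distances. Here is a rough sketch, which is our addition and not from the article: a collision of energy E probes structure down to about ħc/E, with ħc ≈ 197 MeV·fm. The function name is illustrative, and the mapping is an order-of-magnitude heuristic rather than a precise resolution limit.

```python
# Order-of-magnitude sketch: distance probed ~ hbar*c / E (a heuristic).
HBAR_C_EV_M = 1.973269804e-7  # hbar*c in eV*m (about 197.3 MeV*fm)


def probe_distance_m(energy_ev: float) -> float:
    """Distance scale in meters associated with a collision energy in eV."""
    return HBAR_C_EV_M / energy_ev


# Energy rungs named in the text (labels are our own shorthand).
ENERGY_LADDER_EV = {
    "chemistry, solid-state electronics (1 eV)": 1.0,
    "nuclear reactions (1 MeV)": 1e6,
    "particle physics, past half century (1 GeV)": 1e9,
    "terascale (1 TeV)": 1e12,
}

for label, energy in ENERGY_LADDER_EV.items():
    print(f"{label:45s} ~ {probe_distance_m(energy):.1e} m")
```

Each thousandfold step in energy buys a roughly thousandfold finer view. The terascale corresponds to about 2 × 10⁻¹⁹ m, several thousand times smaller than a proton.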

LHC cooldown status as of February 23, 2008

The Large Hadron Collider

Edited by Andy Ross

The Large Hadron Collider (LHC), a short drive from Geneva, will peer into the physics of the shortest distances and the highest energies ever probed. For a decade or more, particle physicists have been eagerly awaiting a chance to explore the terascale domain. Significant new physics is expected to occur at these energies, such as the elusive Higgs particle (believed to be responsible for imbuing other particles with mass) and the particle that constitutes the dark matter that makes up most of the material in the universe.

The mammoth machine, after a nine-year construction period, is scheduled to begin producing its beams of particles later this year. The commissioning process is planned to proceed from one beam to two beams to colliding beams, from lower energies to the terascale, and from weaker test intensities to stronger ones suitable for producing data at useful rates. Each step will pose challenges for the more than 5,000 scientists, engineers and students collaborating on the effort. The particle physics community is impatient for the first results from the LHC.

To break into the new territory that is the terascale, the LHC outdoes previous colliders in almost every basic parameter. It starts by producing proton beams of far higher energies than ever before. Its nearly 7,000 magnets, chilled by liquid helium to less than two kelvins to make them superconducting, will steer and focus two beams of protons traveling within a millionth of a percent of the speed of light. Each proton will have about 7 TeV of energy, roughly 7,000 times the energy locked up in a proton's rest mass. When the machine is fully loaded and at maximum energy, all the circulating particles will carry energy roughly equal to the kinetic energy of about 900 cars traveling at 100 kilometers per hour.
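
The "millionth of a percent" figure follows from special relativity. This is a minimal check. It assumes the standard proton rest energy of 0.938 GeV, a known constant that the article does not quote. The Lorentz factor is γ = E/(mc²), and for γ ≫ 1 the shortfall from light speed is 1 − v/c ≈ 1/(2γ²).

```python
PROTON_REST_ENERGY_GEV = 0.938272  # m_p c^2 (assumed standard value)
BEAM_ENERGY_GEV = 7000.0           # about 7 TeV per proton, from the text

# Lorentz factor: total energy divided by rest energy.
gamma = BEAM_ENERGY_GEV / PROTON_REST_ENERGY_GEV

# For gamma >> 1, 1 - v/c ~ 1 / (2 gamma^2). Using the expansion avoids the
# rounding loss of computing 1.0 - beta directly in floating point.
speed_shortfall = 1.0 / (2.0 * gamma**2)

print(f"gamma ~ {gamma:.0f}")
print(f"1 - v/c ~ {speed_shortfall:.1e} ({speed_shortfall * 100:.1e} % of c)")
```

γ comes out near 7,460, which the text rounds to about 7,000 times the rest energy. The shortfall is just under 10⁻⁶ percent of light speed.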

The protons will travel in nearly 3,000 bunches, spaced all around the 27-kilometer circumference of the collider. Each bunch of up to 100 billion protons will be the size of a needle, just a few centimeters long and squeezed down to 16 microns in diameter (about the same as the thinnest of human hairs) at the collision points. At four locations around the ring, these needles will pass through one another, producing more than 600 million particle collisions every second. The collisions, or events, will occur between the quarks and gluons making up the protons. The most cataclysmic of the smashups will release about 2 TeV.

Four giant detectors (the largest would roughly half-fill the Notre Dame cathedral in Paris, and the heaviest contains more iron than the Eiffel Tower) will track and measure the thousands of particles spewed out by each collision occurring at their centers. Despite the detectors' vast size, some elements of them must be positioned with a precision of 50 microns.

The nearly 100 million channels of data streaming from each of the two largest detectors would fill 100,000 CDs every second. So instead of attempting to record it all, the experiments will have what are called trigger and data-acquisition systems, which act like vast spam filters. They immediately discard almost all the information and send the data from only the most promising-looking 100 events each second to the LHC's central computing system at CERN for archiving and later analysis.

A farm of a few thousand computers at CERN will turn the filtered raw data into more compact data sets organized for physicists to comb through. Their analyses will take place on a grid network comprising tens of thousands of PCs at institutes around the world, all connected to a hub of a dozen major centers on three continents that are in turn linked to CERN by dedicated optical cables.

In the coming months, all eyes will be on the accelerator. The final connections between adjacent magnets in the ring were made in November 2007, and the eight sectors are now being cooled almost to the cryogenic temperature required for operation. After the operation of the sectors has been tested, first individually and then together as an integrated system, a beam of protons will be injected into one of the two beam pipes that carry them around the machine's 27 kilometers.

The series of smaller accelerators that supply the beam to the main LHC ring has already been checked out. The first injection of the beam will be a critical step, and the LHC scientists will start with a low-intensity beam to reduce the risk of damaging LHC hardware. Only when they have carefully assessed how that pilot beam responds inside the LHC and have made fine corrections to the steering magnetic fields will they proceed to higher intensities. For the first running at the design energy of 7 TeV, only a single bunch of protons will circulate in each direction.

As the full commissioning of the accelerator proceeds in this measured step-by-step fashion, problems are sure to arise. The big unknown is how long the engineers and scientists will take to overcome each challenge. If a sector has to be brought back to room temperature for repairs, that will add months to the schedule. The four experiments (ATLAS, ALICE, CMS and LHCb) also have a lengthy process of completion ahead of them, and they must be closed up before the beam commissioning begins.

When everything is working together at the design luminosity, as many as 20 events will occur with each crossing of the needlelike bunches of protons. As little as 25 nanoseconds separates one crossing from the next. Product particles sprayed out from the collisions of one crossing will still be moving through the outer layers of a detector when the next crossing is already taking place. Individual elements in each detector layer respond when a particle of the right kind passes through them. The millions of channels of data streaming away from the detector produce about a megabyte of data for each event, or roughly a petabyte every two seconds.
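
The rates in the last few paragraphs can be cross-checked with simple arithmetic. The sketch below takes three numbers from the text: the 25 ns spacing, 20 events per crossing and one megabyte per event. The fill fraction is an added assumption: 2,808 filled out of 3,564 possible 25 ns slots, the LHC design values. It is needed because not every slot carries a bunch.

```python
BUNCH_SPACING_S = 25e-9      # time between crossings, from the text
EVENTS_PER_CROSSING = 20     # at design luminosity, from the text
BYTES_PER_EVENT = 1e6        # about a megabyte per event, from the text

# Assumption: design filling of 2,808 bunches in 3,564 available 25 ns slots.
FILLED_FRACTION = 2808 / 3564

slot_rate_hz = 1.0 / BUNCH_SPACING_S                # 40 million slots per second
crossing_rate_hz = slot_rate_hz * FILLED_FRACTION   # slots that hold colliding bunches
event_rate_hz = crossing_rate_hz * EVENTS_PER_CROSSING
raw_bytes_per_s = event_rate_hz * BYTES_PER_EVENT
seconds_per_petabyte = 1e15 / raw_bytes_per_s

print(f"crossings per second: {crossing_rate_hz:.3g}")
print(f"events per second:    {event_rate_hz:.3g}")
print(f"seconds per petabyte: {seconds_per_petabyte:.2f}")
```

The result is roughly 630 million events per second and a petabyte every 1.6 seconds or so. This is consistent with the "more than 600 million collisions every second" and "a petabyte every two seconds" quoted in the text.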

The trigger system that will reduce this flood of data to manageable proportions has multiple levels. The first level will receive and analyze data from only a subset of all the detector's components, from which it can pick out promising events. This level-one triggering will be conducted by hundreds of dedicated computer boards. They will select 100,000 bunches of data per second for more careful analysis by the next stage, the higher-level trigger.

The higher-level trigger will receive data from all of the detector's millions of channels. Its software will run on a farm of computers. With an average of 10 microseconds elapsing between successive bunches approved by the level-one trigger, it will have enough time to reconstruct each event. It will project tracks back to common points of origin and thereby form a coherent set of data for the particles produced by each event.
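
The two-stage idea can be illustrated with a toy model. A cheap cut on a summary quantity runs on everything. Then a costlier step, here projecting tracks back to a common point of origin, runs only on the survivors. This is a hypothetical sketch: the event fields, thresholds and function names are invented for illustration and bear no relation to the real ATLAS or CMS trigger software.

```python
from dataclasses import dataclass, field


@dataclass
class Event:
    """Hypothetical toy event; real detector records are far richer."""
    coarse_energy_gev: float  # summary from a subset of detector components
    # Positions along the beam line that each track extrapolates back to.
    track_origins_mm: list[float] = field(default_factory=list)


def level_one(event: Event, threshold_gev: float = 50.0) -> bool:
    """Fast, coarse decision that looks only at the summary quantity."""
    return event.coarse_energy_gev > threshold_gev


def largest_vertex(origins_mm: list[float], tolerance_mm: float = 1.0) -> int:
    """Size of the biggest group of tracks that share a common point of origin."""
    best = 0
    for seed in origins_mm:
        count = sum(1 for z in origins_mm if abs(z - seed) <= tolerance_mm)
        best = max(best, count)
    return best


def higher_level(event: Event, min_tracks: int = 4) -> bool:
    """Slower decision on the full event: require a well-populated common vertex."""
    return largest_vertex(event.track_origins_mm) >= min_tracks


def run_trigger(events: list[Event]) -> list[Event]:
    """Apply the cheap cut to everything, the expensive one only to survivors."""
    survivors = [e for e in events if level_one(e)]
    return [e for e in survivors if higher_level(e)]


quiet = Event(12.0, [0.1, 0.3])                       # fails level one
scattered = Event(80.0, [5.0, -20.0, 33.0, 60.0])     # passes level one, no common vertex
promising = Event(120.0, [1.2, 1.5, 0.9, 1.1, 40.0])  # four tracks from one point
kept = run_trigger([quiet, scattered, promising])
print(len(kept))
```

The design choice mirrors the text. The expensive reconstruction is only affordable because the first stage has already thrown most events away.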

The higher-level trigger passes about 100 events per second to the hub of the LHC's global network of computing resources, the LHC Computing Grid. A grid system combines the processing power of a network of computing centers and makes it available to users who may log in to the grid from their home institutes.
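
The trigger chain's numbers fit together as follows. This sketch takes three figures from the text: the level-one output (100,000 per second), the higher-level output (100 events per second) and one megabyte per event. Two things are our own illustration: rounding the crossing rate up to one every 25 ns, and the per-day figure, which assumes continuous running.

```python
CROSSING_RATE_HZ = 40e6    # one crossing slot every 25 ns (upper bound: every slot filled)
L1_ACCEPT_HZ = 100_000     # level-one output rate, from the text
HLT_ACCEPT_HZ = 100        # events per second sent to the grid, from the text
BYTES_PER_EVENT = 1e6      # about a megabyte per event, from the text
SECONDS_PER_DAY = 86_400

mean_gap_us = 1e6 / L1_ACCEPT_HZ                # average spacing of level-one accepts
l1_rejection = CROSSING_RATE_HZ / L1_ACCEPT_HZ  # crossings per level-one accept
hlt_rejection = L1_ACCEPT_HZ / HLT_ACCEPT_HZ    # level-one accepts per stored event
stored_mb_per_s = HLT_ACCEPT_HZ * BYTES_PER_EVENT / 1e6
stored_tb_per_day = stored_mb_per_s * SECONDS_PER_DAY / 1e6

print(f"mean gap between level-one accepts: {mean_gap_us:.0f} microseconds")
print(f"rejection factors: level one ~{l1_rejection:.0f}, higher level ~{hlt_rejection:.0f}")
print(f"stored: {stored_mb_per_s:.0f} MB/s, about {stored_tb_per_day:.1f} TB per day of running")
```

The 10-microsecond figure quoted for the higher-level trigger is simply the reciprocal of the level-one output rate.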

The LHC grid is organized into tiers. Tier 0 is at CERN itself and consists in large part of thousands of commercially bought servers, stacked in row after row of shelves. Some are PC-style boxes and, more recently, some are blade systems resembling black pizza boxes. Computers are still being purchased and added to the system. The data passed to Tier 0 by the LHC data-acquisition systems will be archived on magnetic tape.

Tier 0 will distribute the data to the 12 Tier 1 centers, which are located at CERN itself and at 11 other major institutes around the world. Thus, the unprocessed data will exist in two copies, one at CERN and one divided up around the world. Each of the Tier 1 centers will also host a complete set of the data in a compact form structured for physicists to carry out many of their analyses.
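
The two rules above can be expressed as a small placement function. Raw data get two copies, and the compact format is fully replicated at every Tier 1 center. This is a hypothetical sketch: the site names, the file names and the round-robin assignment are illustrative, not how the LHC Computing Grid actually assigned data.

```python
from collections import Counter

# Hypothetical site labels: the text gives the number of Tier 1 centers
# (CERN plus 11 others) but not their names.
CERN_TIER0_TAPE = "cern-tier0-tape"
CERN_TIER1 = "cern-tier1"
EXTERNAL_TIER1 = [f"tier1-site-{i:02d}" for i in range(1, 12)]


def place_raw(files: list[str]) -> dict[str, list[str]]:
    """Two copies of raw data: one on CERN tape, one at a single external Tier 1.

    Round-robin assignment is an assumption for illustration; a real grid
    would weight the shares by each center's capacity.
    """
    return {
        name: [CERN_TIER0_TAPE, EXTERNAL_TIER1[i % len(EXTERNAL_TIER1)]]
        for i, name in enumerate(files)
    }


def place_compact(dataset: str) -> dict[str, list[str]]:
    """The compact analysis format is copied in full to every Tier 1 center."""
    return {dataset: [CERN_TIER1, *EXTERNAL_TIER1]}


raw = place_raw([f"run-{n:04d}.raw" for n in range(22)])
# Count how many raw files each external site holds (skip the CERN tape copy).
per_site = Counter(site for sites in raw.values() for site in sites[1:])
print(per_site.most_common(3))
```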

The full LHC Computing Grid also has Tier 2 centers, which are smaller computing centers at universities and research institutes. Computers at these centers will supply distributed processing power to the entire grid for the data analyses.

The LHC has experienced some setbacks along the way. In March 2007 a magnet of the kind used to focus the proton beams just ahead of a collision point (called a quadrupole magnet) suffered a serious failure during a test of its ability to withstand the forces that could occur if its coils lost their superconductivity (a mishap called quenching). Part of the magnet's supports collapsed under the pressure of the test, producing a loud bang like an explosion.

These magnets come in groups of three. They squeeze the beam first from side to side, then in the vertical direction, and finally again from side to side, a sequence that brings the beam to a sharp focus. The LHC uses 24 of them, one triplet on each side of each of the four interaction points. At first the LHC scientists did not know whether all 24 would need to be removed from the machine and brought above ground for modification. That time-consuming procedure could have added weeks to the schedule. The problem was a design flaw. CERN and Fermilab researchers worked feverishly, identifying the problem and devising a strategy to fix the undamaged magnets in the accelerator tunnel.
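
Why three magnets? A quadrupole that focuses in one plane defocuses in the other, yet an alternating sequence can focus in both. The thin-lens sketch below is a textbook model, not the LHC's real optics. The magnet strengths and spacing are hypothetical and chosen only to show the effect. Net focusing power is read off the lower-left element of the 2×2 transfer matrix acting on a particle's position and angle; a negative element means the triplet focuses.

```python
Matrix = list[list[float]]


def mat_mul(a: Matrix, b: Matrix) -> Matrix:
    """Product of two 2x2 matrices stored as nested lists."""
    return [
        [a[0][0] * b[0][0] + a[0][1] * b[1][0], a[0][0] * b[0][1] + a[0][1] * b[1][1]],
        [a[1][0] * b[0][0] + a[1][1] * b[1][0], a[1][0] * b[0][1] + a[1][1] * b[1][1]],
    ]


def thin_quad(power: float) -> Matrix:
    """Thin lens acting on (position, angle); power = 1/f, positive focuses."""
    return [[1.0, 0.0], [-power, 1.0]]


def drift(length_m: float) -> Matrix:
    """Field-free gap: position changes by angle times length."""
    return [[1.0, length_m], [0.0, 1.0]]


def triplet(powers: list[float], gap_m: float) -> Matrix:
    """Lens, gap, lens, gap, lens; the beam meets powers[0] first."""
    m = thin_quad(powers[0])
    for p in powers[1:]:
        m = mat_mul(thin_quad(p), mat_mul(drift(gap_m), m))
    return m


outer = 0.1  # hypothetical outer-magnet power in 1/m (f = 10 m)
gap = 2.0    # hypothetical spacing in m
# Side to side: focus, defocus (middle magnet twice as strong), focus.
horizontal = triplet([outer, -2 * outer, outer], gap)
# The same magnets act with opposite sign in the vertical plane.
vertical = triplet([-outer, 2 * outer, -outer], gap)

# Net power is -M[1][0]; both come out positive, so the triplet focuses in both planes.
print(f"horizontal: {-horizontal[1][0]:.3f} per m, vertical: {-vertical[1][0]:.3f} per m")
```

Each magnet's effect flips sign between planes, so the individual powers cancel. The spacing between the magnets, however, leaves a net positive (focusing) power in both planes. That is the principle of alternating-gradient focusing.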

In June 2007, CERN director general Robert Aymar announced that he had to postpone the scheduled start-up of the accelerator from November 2007 to spring 2008. The beam energy is to be ramped up faster to try to stay on schedule for doing physics by July.

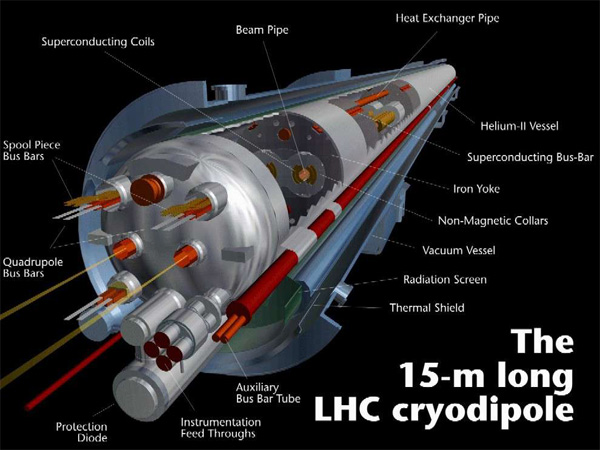

The LHC Remote Operations Center

One of the LHC cryogenic superconducting dipole magnets

A view along the LHC main tunnel

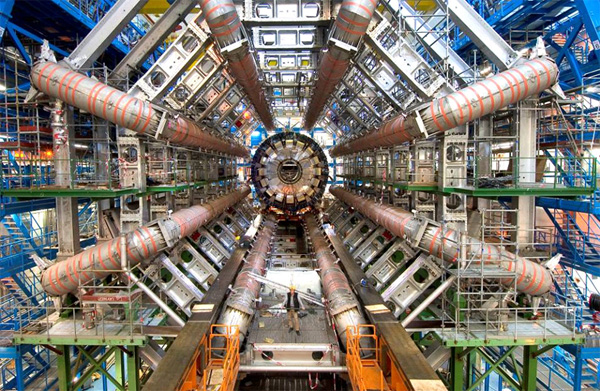

The LHC ATLAS detector under construction, with an engineer in the foreground

The Coming Revolutions in Physics

Edited by Andy Ross

When physicists are asked to give a short answer to the question of why we are building the Large Hadron Collider (LHC), we usually reply "Higgs": the Higgs particle, the last remaining undiscovered piece of our current theory of matter. But the full story is more interesting. The new collider provides the greatest leap in capability of any instrument in the history of particle physics.

In this new world, we expect to learn what distinguishes two of the forces of nature, electromagnetism and the weak interactions, with broad implications for our conception of the everyday world. We will gain a new understanding of simple and profound questions: Why are there atoms? Why chemistry? What makes stable structures possible?

The search for the Higgs particle is only the first step. Beyond it lie phenomena that may clarify why gravity is so much weaker than the other forces of nature and that could reveal the nature of the unknown dark matter that fills the universe. Even deeper lies the prospect of insights into the different forms of matter, the unity of outwardly distinct particle categories and the nature of spacetime. The LHC will help us refine these questions.

The Standard Model of particle physics can explain much about the known world. Its main elements fell into place during the 1970s and 1980s. Yet even as the Standard Model has gained ever more experimental support, a growing list of phenomena lies outside its purview, and new theoretical ideas have expanded our conception of what a richer and more comprehensive worldview might look like. Taken together, the continuing progress in experiment and theory points to a very lively decade ahead.

Our current conception of matter comprises two main particle categories, quarks and leptons, together with three of the four known fundamental forces: electromagnetism and the strong and weak interactions. Gravity is, for the moment, left to the side. Quarks, which make up protons and neutrons, generate and feel all three forces. Leptons, the best known of which is the electron, are immune to the strong force. What distinguishes these two categories is a property akin to electric charge, called color. Quarks have color, and leptons do not.

The guiding principle of the Standard Model is that its equations are symmetrical. The equations remain unchanged when you change the perspective from which they are defined, even when the perspective shifts by different amounts at different points in space and time. The symmetry of the equations places very tight constraints on them. These symmetries beget forces that are carried by special particles called bosons.

In the Standard Model, the symmetry of the equations dictates the interactions among particles that the theory describes. For instance, the strong nuclear force follows from the requirement that the equations describing quarks must be the same no matter how one chooses to define quark colors. The strong force is carried by eight particles known as gluons. The other two forces, electromagnetism and the weak nuclear force, are the electroweak forces and are based on a different symmetry. The electroweak forces are carried by a quartet of particles: the photon, the Z boson, the W⁺ boson and the W⁻ boson.

The theory of the electroweak forces was formulated by Sheldon Glashow, Steven Weinberg and Abdus Salam. The weak force, which is involved in radioactive beta decay, does not act on all the quarks and leptons. Each of these particles comes in left-handed and right-handed varieties, and the beta-decay force acts only on the left-handed ones, a striking fact still unexplained 50 years after its discovery.

In the initial stages of its construction, the theory had two essential shortcomings. First, it foresaw four long-range force particles, referred to as gauge bosons, whereas nature has but one: the photon. The other three have a short range, less than about 10⁻¹⁷ meter. According to Heisenberg's uncertainty principle, this limited range implies that the force particles must have a mass approaching 100 GeV. The second shortcoming is that the family symmetry does not permit masses for the quarks and leptons, yet these particles do have mass.
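
The step from "short range" to "mass approaching 100 GeV" is the standard uncertainty-principle (Yukawa) estimate. A force carrier of mass M can reach roughly ħ/(Mc) = ħc/(Mc²). Below is a quick check that uses the measured W and Z masses, about 80.4 and 91.2 GeV; the article does not quote these values. The helper names are our own.

```python
HBAR_C_GEV_M = 1.973269804e-16  # hbar*c in GeV*m


def force_range_m(mass_gev: float) -> float:
    """Rough reach of a force carried by a particle of the given mass (GeV)."""
    return HBAR_C_GEV_M / mass_gev


def carrier_mass_gev(range_m: float) -> float:
    """Inverse estimate: the carrier mass implied by a given range."""
    return HBAR_C_GEV_M / range_m


for name, mass in [("W", 80.4), ("Z", 91.2)]:
    print(f"{name}: range ~ {force_range_m(mass):.1e} m")
print(f"range of 1e-17 m -> mass ~ {carrier_mass_gev(1e-17):.0f} GeV")
```

A range below 10⁻¹⁷ m therefore already requires a carrier heavier than about 20 GeV. The actual reach of W and Z exchange is a few times 10⁻¹⁸ m, which corresponds to masses near 100 GeV.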

The way out here is to recognize that a symmetry of the laws of nature can be broken. The needed theoretical apparatus was worked out in the 1960s by Peter Higgs, Robert Brout, François Englert and others. The inspiration came from superconductivity, in which certain materials carry electric current with zero resistance at low temperatures. Although the laws of electromagnetism themselves are symmetrical, the behavior of electromagnetism within the superconducting material is not. A photon gains mass within a superconductor, thereby limiting the intrusion of magnetic fields into the material.

This phenomenon is a prototype for the electroweak theory. If space is filled with a type of superconductor that affects the weak interaction rather than electromagnetism, it gives mass to the W and Z bosons and limits the range of the weak interactions. This superconductor consists of particles called Higgs bosons. The quarks and leptons also acquire their mass through their interactions with the Higgs boson. By obtaining mass in this way, these particles remain consistent with the symmetry requirements of the weak force.

The paradigm of quark and lepton constituents interacting by means of gauge bosons completely revised our conception of matter. It also pointed to the possibility that the strong, weak and electromagnetic interactions meld into one when the particles are given very high energies. The electroweak theory shows how the quarks and leptons might acquire masses but does not predict what those masses should be. It is similarly indefinite about the mass of the Higgs boson itself. Many of the outstanding problems of particle physics and cosmology are linked to the question of exactly how the electroweak symmetry is broken.

Encouraged by a string of promising observations, theorists began to take the Standard Model seriously enough to probe its limits. Toward the end of 1976 Benjamin W. Lee, Harry B. Thacker and I devised a thought experiment to investigate how the electroweak forces would behave at very high energies. We imagined collisions among pairs of W, Z and Higgs bosons. At the time of our work, not one of these particles had been observed.

We noticed a subtle interplay among the forces generated by these particles. Extended to very high energies, our calculations made sense only if the mass of the Higgs boson were not too large, the equivalent of less than 1 TeV. If the Higgs is lighter than 1 TeV, weak interactions remain feeble and the theory works reliably at all energies. If the Higgs is heavier than 1 TeV, the weak interactions strengthen near that energy scale and all manner of exotic particle processes ensue. This mass threshold means that something new is to be found when the LHC turns the thought experiment into a real one.
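
The 1 TeV threshold can be reproduced in a couple of lines. The result is usually quoted as M_H² ≤ 8π√2/(3G_F). It comes from requiring that the s-wave amplitude for scattering of W, Z and Higgs bosons respect unitarity. G_F is the Fermi constant, a measured value that the article does not quote. Order-one changes in the unitarity criterion shift the number somewhat, so treat it as a scale rather than a sharp edge.

```python
import math

G_FERMI_GEV2 = 1.1663787e-5  # Fermi constant in GeV^-2 (measured value)

# Unitarity bound as usually quoted: M_H^2 <= 8*pi*sqrt(2) / (3 * G_F).
m_higgs_max = math.sqrt(8 * math.pi * math.sqrt(2) / (3 * G_FERMI_GEV2))
print(f"M_H <~ {m_higgs_max:.0f} GeV")
```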

Experiments may already have observed the influence of the Higgs. The uncertainty principle implies that particles such as the Higgs can exist for moments too fleeting to be observed directly but long enough to leave a subtle mark on particle processes. The Large Electron-Positron collider at CERN, the previous inhabitant of the tunnel now used by the LHC, detected the work of such an unseen hand. Comparison of precise measurements with theory strongly hints that the Higgs exists and has a mass less than about 192 GeV.

That the Higgs must weigh less than 1 TeV poses an interesting riddle. Quantities such as mass are modified by quantum effects. Just as the Higgs can exert a behind-the-scenes influence on other particles, other particles can do the same to the Higgs. Those particles come in a range of energies, and their net effect depends on where precisely the Standard Model gives way to a deeper theory. Suppose the model holds all the way to 10¹⁵ GeV, where the strong and electroweak interactions appear to unify. Then particles with truly titanic energies act on the Higgs and give it a comparably high mass. So why does it appear to have a mass of no more than 1 TeV?

This tension is known as the hierarchy problem. One resolution would be a precarious balance in which the contributions of different particles add and subtract to leave a small net mass. Physicists are suspicious of immensely precise cancellations that are not mandated by deeper principles. It seems likelier that the LHC will find both the Higgs boson and other new phenomena.
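
How precarious would the balance be? Here is a crude estimate. It assumes the quantum corrections to the Higgs mass squared grow like the square of the scale Λ at which the Standard Model gives way, with order-one coefficients dropped. The underlying value and the corrections must then cancel to about one part in (Λ/M_H)². The function name is our own.

```python
def required_cancellation(cutoff_gev: float, higgs_mass_gev: float = 1000.0) -> float:
    """Fraction to which corrections ~ cutoff^2 must cancel to leave higgs_mass^2.

    Order-of-magnitude only: numerical prefactors and couplings are ignored.
    """
    return (higgs_mass_gev / cutoff_gev) ** 2


for cutoff in (1e3, 1e4, 1e15):
    ratio = 1 / required_cancellation(cutoff)
    print(f"new physics at {cutoff:.0e} GeV -> cancel to 1 part in {ratio:.0e}")
```

If new phenomena appear near 1 TeV, no delicate cancellation is needed. If the Standard Model holds to 10¹⁵ GeV, the cancellation must hold to roughly 24 decimal places, which is the kind of coincidence the text says physicists distrust.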

Theorists have explored many ways to resolve the hierarchy problem. Supersymmetry supposes that every particle has an as-yet-unseen superpartner whose spin differs by one half unit. If nature were exactly supersymmetric, the masses of particles and superpartners would be identical, and their influences on the Higgs would cancel each other out exactly. But in that case, physicists would have seen the superpartners by now. So if supersymmetry exists, it must be a broken symmetry. The net influence on the Higgs could still be acceptably small if superpartner masses were less than about 1 TeV, within reach of the LHC.

Another option, called technicolor, supposes that the Higgs boson is not truly a fundamental particle but is built out of as-yet-unobserved constituents. Collisions at energies around 1 TeV would allow us to look within it and thus reveal its composite nature. Like supersymmetry, technicolor implies that the LHC will set free a veritable menagerie of exotic particles.

One more piece of evidence points to new phenomena on the TeV scale. The dark matter that makes up the bulk of the material content of the universe appears to be a novel type of particle. If this particle interacts with the strength of the weak force, then the big bang would have produced it in the requisite numbers as long as its mass lies between approximately 100 GeV and 1 TeV.
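
The 100 GeV to 1 TeV window can be reproduced at the order-of-magnitude level. This sketch rests on several assumptions that are not in the article:

- the standard rough relation Ωh² ≈ 3 × 10⁻²⁷ cm³ s⁻¹ / ⟨σv⟩ between the leftover density and the annihilation rate;
- a crude weak-strength rate ⟨σv⟩ ~ α²/m²;
- a hypothetical coupling α between 0.01 and 0.03;
- the measured dark-matter density Ωh² ≈ 0.12.

```python
import math

HBARC_SQ_CM2_GEV2 = 0.3894e-27  # (hbar c)^2 = 0.3894 mb*GeV^2, with 1 mb = 1e-27 cm^2
C_CM_PER_S = 2.998e10           # speed of light
RELIC_CONSTANT = 3e-27          # cm^3/s in the rough relation Omega h^2 ~ 3e-27 / <sigma v>
OBSERVED_OMEGA_H2 = 0.12        # measured dark-matter density (assumed input)


def sigma_v_cm3_per_s(mass_gev: float, alpha: float) -> float:
    """Crude weak-strength annihilation rate <sigma v> ~ alpha^2 / m^2, in cm^3/s."""
    return alpha**2 / mass_gev**2 * HBARC_SQ_CM2_GEV2 * C_CM_PER_S


def relic_omega_h2(mass_gev: float, alpha: float) -> float:
    """Leftover abundance: weaker annihilation (heavier particle) leaves more behind."""
    return RELIC_CONSTANT / sigma_v_cm3_per_s(mass_gev, alpha)


def mass_matching_observation(alpha: float) -> float:
    """Mass (GeV) at which the leftover abundance equals the observed value.

    relic_omega_h2 grows like mass^2, so the equation can be solved directly.
    """
    omega_at_1_gev = relic_omega_h2(1.0, alpha)
    return math.sqrt(OBSERVED_OMEGA_H2 / omega_at_1_gev)


for alpha in (0.01, 0.03):
    print(f"alpha = {alpha}: mass ~ {mass_matching_observation(alpha):.0f} GeV")
```

Both answers land between roughly 200 and 650 GeV, inside the window the article quotes. Changing the assumed coupling moves the answer but keeps it near the TeV scale.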

Opening the TeV scale to exploration means entering a new world of experimental physics. Making a thorough exploration of this world is the top priority for accelerator experiments. The answers will not only be satisfying for particle physics; they will also deepen our understanding of the everyday world.

The Guardian CERN page

Guardian online, June/July 2008

Guardian

Stephen Hawking

"The Large Hadron Collider at CERN will smash particles together to recreate the moments after the big bang. Some theories of spacetime suggest the particle collisions might create mini black holes. If that happened, I have proposed that these black holes would radiate particles and disappear. If we saw this at the LHC, it would open up a new area of physics, and I might even win a Nobel prize. But I'm not holding my breath." (Stephen Hawking)

"Cathedrals were designed to celebrate the glory of God as manifested through the human spirit in words, music and art. The LHC has been engineered to celebrate and proclaim the glory of the natural world, and of our remarkable ability to comprehend it, as manifested through experimental science." (Lawrence Krauss)

Plus articles by Brian Cox, A.C. Grayling, Michio Kaku, Martin Rees and others.

Guardian

Peter Higgs

Father of the 'God particle'

The Higgs boson is the particle that is thought to give everything else in the universe mass. Its theistic nickname was coined by Leon Lederman, but Higgs himself is no fan of the label. "I find it embarrassing because, though I'm not a believer myself, I think it is the kind of misuse of terminology which I think might offend some people."

Genesis machine poised to end quest for 'God particle'

"I sincerely hope I'm not the only one who's at least slightly worried about this mad scientist Peter Higgs and his 'Genesis machine'" (a reader)

A Bluffer's Guide

A graphic guide to the LHC, how it will work and the physics that lies behind it all

Da LHC is Superduper Fly: rap video

Smashing Idea

By William Booth

Washington Post, September 11, 2008

Edited by Andy Ross

It is the biggest machine ever built. Everyone says it looks like a movie set for a corny James Bond villain. They are correct. The machine is attended by brainiacs wearing hard hats and running around on catwalks. They are looking for the answer to the question: Where does everything in the universe come from? Price tag: $8 billion plus.

The world's largest particle accelerator is buried deep in the earth beneath herds of placid dairy cows grazing on the Swiss-French border. The thing has been under construction for years, like the pyramids. Its centerpiece is a circular 17-mile tunnel that contains a pipe swaddled in supermagnets refrigerated to crazy-low temperatures, colder than deep space.

The idea is to set two beams of protons traveling in opposite directions around the tunnel, redlining at nearly the speed of light and generating wicked energy that will mimic the cataclysmic conditions at the beginning of time. The beams then smash into each other in a furious re-creation of the Big Bang, this time recorded by giant digital cameras.

Wednesday, they fired this sucker up ...

AR This is so exciting. I can hardly wait for the results. What will the Higgs look like?

The Higgs Boson

By Anna Kucirkova, May 2018

Edited by Andy Ross
